HUST Operating System Lab3: Memory Management

Operating System Lab3: Memory Management

Experiment Objectives

Understand page replacement algorithm principles and write programs to demonstrate page replacement algorithms.

Verify the mechanism of Linux virtual address to physical address conversion

Understand and verify the principle of program execution locality.

Understand and verify the page fault handling process.

Experiment Content

Write a 2D array traversal program in Win/Linux to understand the principle of locality.

Simulate and implement OPT, FIFO, or LRU page replacement algorithms in Windows/Linux.

Study and modify Linux kernel’s page fault handling function do_no_page (new version should be handle_mm_fault) or page frame allocation function get_free_page, and use printk to print debug information. Note: Kernel compilation is required. Recommended: Ubuntu Kylin or Kylin system.

Use /proc/pid/pagemap technology in Linux to calculate physical addresses corresponding to virtual addresses of variables or functions. Recommended: Ubuntu Kylin or Kylin system.

Task 1: Write a 2D Array Traversal Program to Understand the Principle of Program Locality

Task Requirements

Make the array as large as possible, and try changing the array size and the order of inner and outer loops. For example, change from [2048] X [2048] to [10240] x [20480], and observe their traversal efficiency.

Observe their page fault counts in Task Manager or using the /proc file system.

Code Implementation

1 |

|

32-bit Program Compilation

If you directly add -m32 for compilation, it will report an error:

Because 32-bit libraries are missing, you need to install 32-bit libraries:

1 | sudo dpkg --add-architecture i386 # Add 32-bit architecture |

32-bit and 64-bit Program Execution Results

Using g++ to compile 32-bit and 64-bit executable files test_32 and test_64 respectively, the execution results are as follows:

Observation and Analysis of Phenomena

- Execution time:

- In smaller matrices (2048x2048), 64-bit programs have slightly less execution time than 32-bit programs.

- In larger matrices (10240x20480), 64-bit programs are significantly faster than 32-bit programs in row-major traversal, but in column-major traversal, the time difference between the two is not significant.

- Page faults:

- For both programs (32-bit and 64-bit), under the same matrix size and traversal method, the number of page faults is almost identical.

Execution time differences:

64-bit programs can typically handle large datasets more efficiently because they can utilize larger address spaces and generally more efficient data processing capabilities (e.g., larger registers and better memory management). This explains why 64-bit programs are faster than 32-bit programs in row-major traversal when processing larger matrices. For column-major traversal, due to memory access patterns causing frequent cache misses, this may somewhat offset the advantages of 64-bit architecture, resulting in similar performance between the two in large matrix column-major traversal.

Page fault similarity:

The number of page faults mainly depends on the program’s memory access patterns and the operating system’s page management strategy, rather than the program’s bit width. Therefore, even for 32-bit and 64-bit programs, if they perform the same memory access operations, the number of page faults may be very similar. It seems more dependent on memory access patterns and the operating system’s memory management.

But I actually have a question: intuitively, column-major traversal should produce more page faults than row-major traversal, because in column-major traversal, each access address is continuous, while in row-major traversal, each access address is discontinuous. But in reality, the number of page faults for both is almost the same.

GPT’s answer:

- Memory paging and access patterns:

- In modern computer systems, physical memory is managed in pages. If a matrix’s rows or columns span multiple memory pages, then both row-major and column-major traversal may cause page faults.

- In row-major traversal, if each row of the matrix spans multiple pages, then even if access is continuous, page faults may occur when accessing new rows.

- In column-major traversal, although each jump to a new column may touch new memory pages, if the column data is small and can be completely contained within a single or few memory pages, the occurrence of page faults may not be much more than in row-major traversal.

- Cache and prefetch strategies:

- Modern processors have advanced cache and prefetch mechanisms. These mechanisms may somewhat reduce the expected number of page faults in column-major traversal.

- Processors may be able to predict upcoming memory access patterns and preload data from main memory to cache, so even column-major access may not immediately cause page faults.

- Operating system memory management:

- The operating system may adopt some strategies in memory management, such as lazy allocation, which may cause the actual number of page faults to differ from expectations.

- The operating system may maintain a certain number of free page pools, so in early memory access, the occurrence rate of page faults may be lower.

- Physical memory layout of matrices:

- The actual layout of matrices in physical memory may also affect the occurrence of page faults. For example, if some parts of the matrix have already been loaded into memory for other reasons, this may reduce page faults in specific traversal methods.

- Overall system memory load:

- If other processes running on the system consume a lot of memory, this may affect the page fault behavior of your program. The overall memory load and availability of the system may cause different page fault behaviors for the same operation at different time points.

Task 2: Simulate and Implement OPT, FIFO, or LRU Page Replacement Algorithms

Task Requirements

Use array traversal operations to simulate program instruction execution;

Use one large array A (e.g., 2400 elements) to simulate a process, with random numbers in the array. When each element is accessed, use printf to print it out, simulating instruction execution. The size of array A must be an integer multiple of the set page size (e.g., 10 elements or 16 elements, etc.).

Use 3-8 small arrays (e.g., array B, array C, array D, etc.) to simulate allocated page frames. The size of small arrays equals the page size (e.g., 10 instruction sizes, i.e., 10 elements). The small arrays contain copies of the corresponding page content from the large array (build a page table separately to describe the relationship between pages of the large array and small array indices).

Access array A in different orders, which can be: sequential, jump, branch, loop, or random. Build access orders yourself. Different orders also reflect program locality to some extent.

The access order of the large array can be defined using the rand() function to simulate instruction access sequences corresponding to the access order of large array A. Then transform the instruction sequence into corresponding page address streams and count “page faults” for different page replacement algorithms. Page faults occur when the corresponding “page” is not loaded into small arrays (e.g., array B, array C, array D, etc.).

In the experiment, page size, number of page frames, access order, and replacement algorithm should all be adjustable.

Code Implementation

1 |

|

Program Execution Results

This is the original output example, but for better demonstration, I made some modifications to the program and commented out some output.

OPT

FIFO

LRU

Since the OPT algorithm needs to know the future page access order, after selecting the OPT algorithm, there is no option 5=random access.

Task 3: Modify Linux Kernel Page Fault Handling Function to Print Debug Information

Task Requirements

Write 2 applications similar to hello world or simple for loops as test targets.

Add debug information using printk in Linux kernel’s page fault handling function do_no_page() or similar functions (function names vary across versions), print page fault information for specific processes (using program name as filter condition), and count page faults.

You can also add debug information using printk in Linux kernel’s physical page frame allocation function get_free_page() or similar functions (function names vary across versions), print information about new page frame allocation during specific process execution (using program name as filter condition), and count related information.

Modify Kernel Source Code

Add Code ./mm/memory.c

1 | // Add two system calls for program control, one to set target program name, one to get target program's page fault count |

1 | // Add debug information in do_no_page function |

System

Call Function Declaration ./include/linux/syscalls.h

1 | // Add declarations for two system calls |

System Call

ID ./arch/x86/entry/syscalls/syscall_64.tbl

1 | // Add system call numbers for two system calls |

System

Call ID Declaration

./include/uapi/asm-generic/unistd.h

1 | // Add declarations for two system calls |

Write Test Code After Kernel Compilation

The compilation will report an error as follows:

Initially I didn’t know what this error meant, and after modifying

for a long time without effect, I later added

#include <linux/syscalls.h> and it worked. This means

that if you define system calls, you must include this header file. But

the error message is not very clear, which caused me to waste a lot of

time.

Below is test1.c, test2.c just removes the newly added system calls. This is to test that the debug information is controllable.

1 |

|

Program Execution Results

As shown in the figure, only the page fault count for the test1 program was printed, while the page fault count for the test2 program was not printed.

Task 4: Calculate VA to PA Mapping using /proc/pid/pagemap in Linux

Task Requirements

Linux’s /proc/pid/pagemap file allows users to view physical addresses and other related information of current process virtual pages. Each virtual page contains a 64-bit value, pay attention to analyzing the 64-bit information.

Get the full name of the current process’s pagemap file

1

2

3

4

5

6

7

8

9// Example code from ppt

pid_t pid = getpid();

sprintf(buf, "%d", pid);

strcpy(filename, "/proc/");

strcat(filename, buf);

strcat(filename, "/pagemap");

// Can be done in one step

sprintf(filename, "/proc/%d/pagemap", pid);Can output virtual addresses, page numbers, physical page frame numbers, physical addresses and other information of one or more global variables or custom functions in the process.

Thinking:

How to extend the experiment (write a general function) to show the physical address corresponding to a specified virtual address of a specified process.

How to extend the experiment to verify that different processes’ shared libraries (e.g., some known, commonly called *.so libraries) have the same physical address.

Code Implementation

program.c implements output of virtual addresses, page numbers, physical page frame numbers, physical addresses and other information of one or more global variables or custom functions.

1 |

|

va2pa.c implements output of physical address corresponding to a specified virtual address of a specified process.

1 |

|

test.c is a test program used to verify that different processes’ shared libraries have the same physical address. For example, the printf function in this program is in the libc.so shared library.

1 |

|

Program Execution Results

Must run with sudo, otherwise this file should not have permission to open

program.c

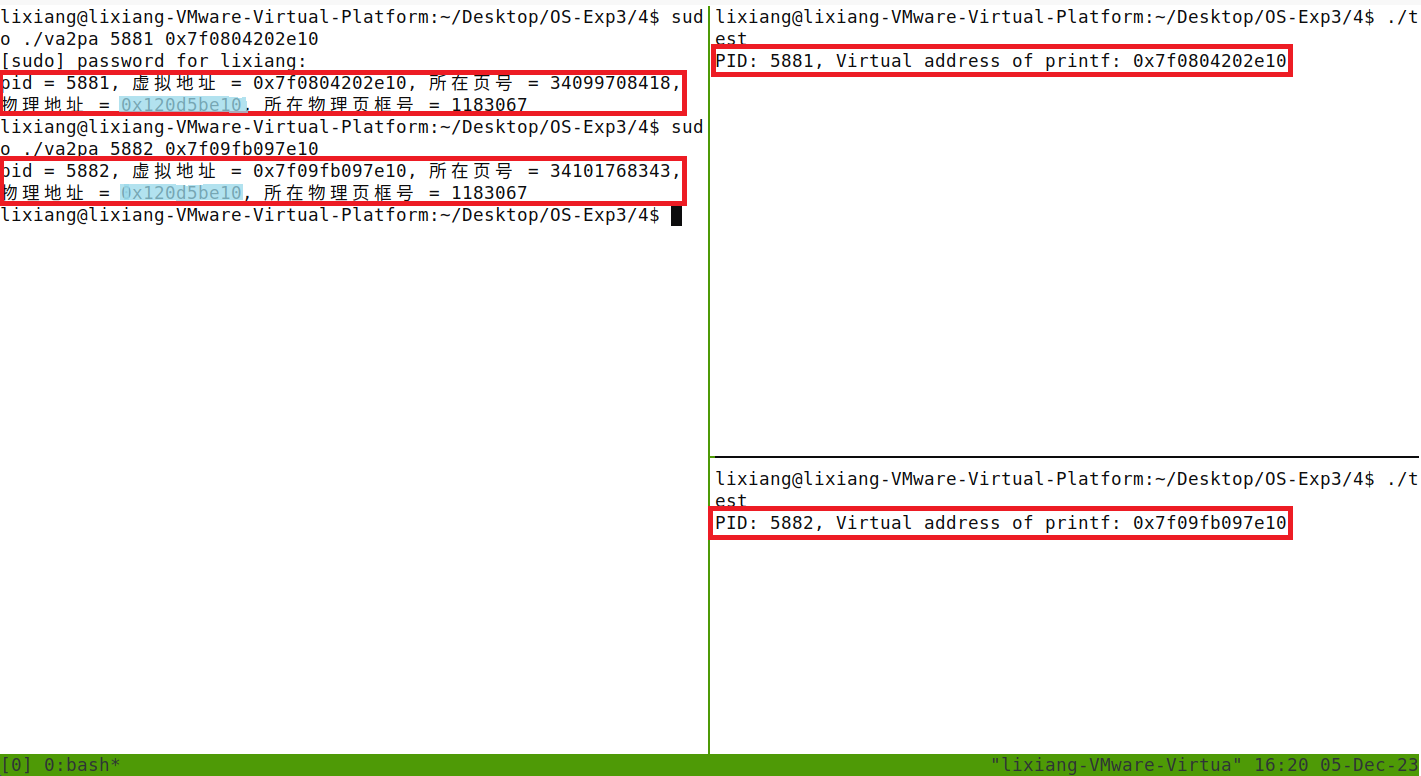

va2pa.c && test.c

Start two test processes, output the virtual addresses of the printf function respectively. va2pa.c calculates the physical addresses corresponding to the virtual addresses of the printf function for both processes.

The two red boxes on the right print the pid of the currently running test program and the virtual address of the printf function, then use the process number and virtual address as parameters for va2pa.c to calculate the physical address. The results are shown in the two boxes on the left. The physical addresses corresponding to the virtual addresses of the printf function for both processes (marked with blue highlighter) are the same, proving that different processes’ shared libraries have the same physical address.